Audio transcription can be a tiring, thorough, and tedious task. In fact, it is one of the most commonly outsourced services as it requires careful attention to detail to produce high-quality transcripts at a low overall cost. How do you get started in outsourcing audio transcription services? We show you how in this comprehensive guide.

What are audio transcription services?

Audio transcription services are the human tasks in converting speech in audio or speech data into a format that is recognizable to machines. Whether it’s an interview, conversation, voice command, or meeting notes, audio transcription services provide a textual representation of an audio or video file. They are useful in many voice-enabled applications, such as in-car navigation systems, virtual assistants, and more.

Audio transcription services are a great way to get your message across automated platforms. Transcribing audio files requires a lot of skill, so you must find a reliable company that can deliver quality work on time.

Types of Audio Transcription

There are many types of audio transcription services—as such, it’s essential to determine your project requirements before reaching out to a vendor. Here are just a few examples of audio transcription services:

Verbatim Transcription

Label audio and speech data with linguistic information on how sounds are pronounced in over 65+ languages.

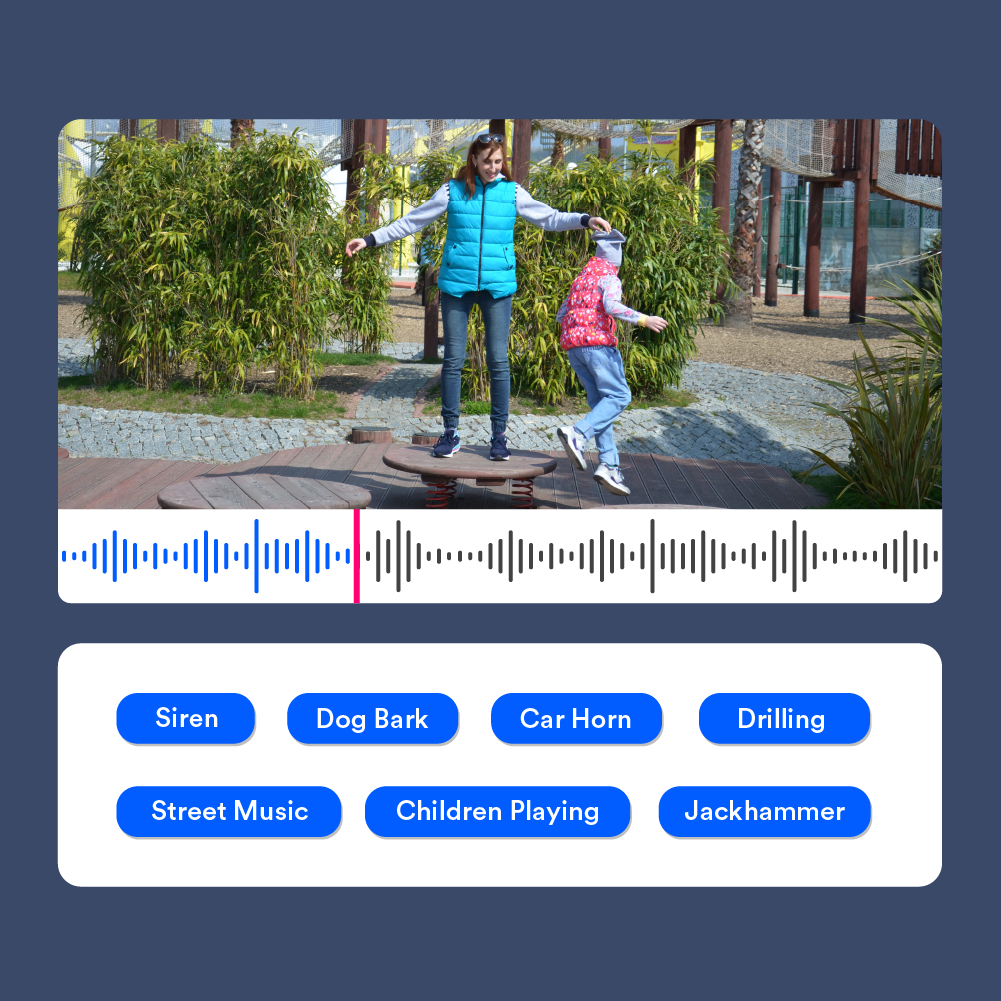

Audio Classification

Classify audio files into a set of predetermined categories based on your project specifications.

Acoustic Audio Collection

Collect diverse audio samples from a variety of environments, such as restaurants, schools, cars, offices, homes, and more.

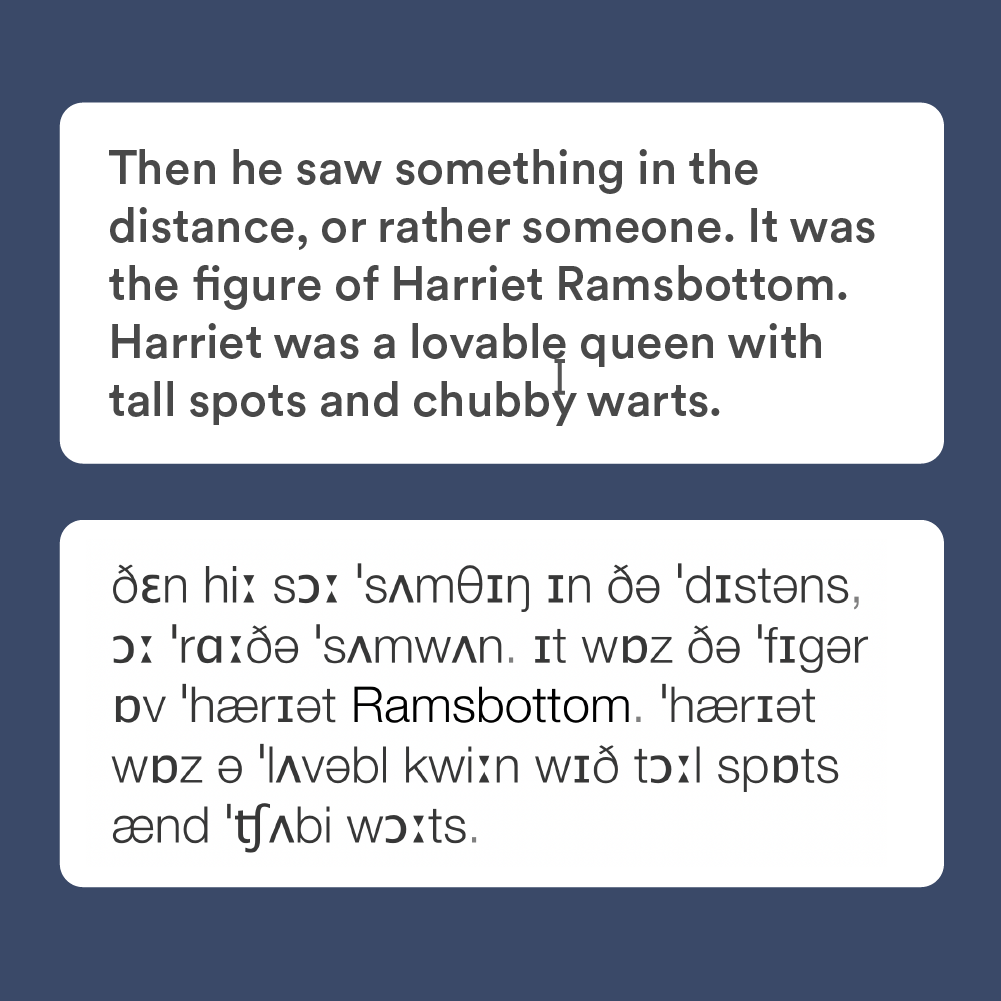

Phonetic Transcription

Label audio and speech data with linguistic information on how sounds are pronounced in over 65+ languages.

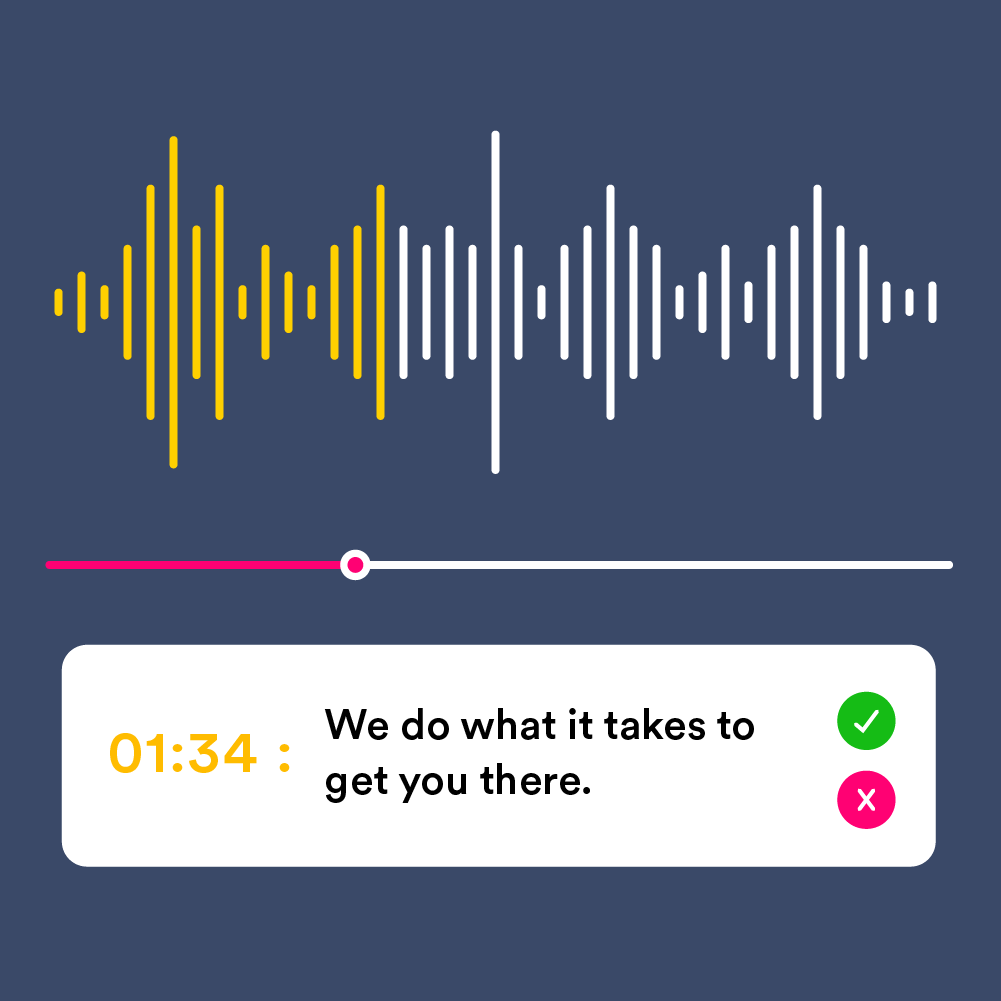

Audio Evaluation

Improve the quality and accuracy of machine-generated speech technology with assessments by real native speakers.

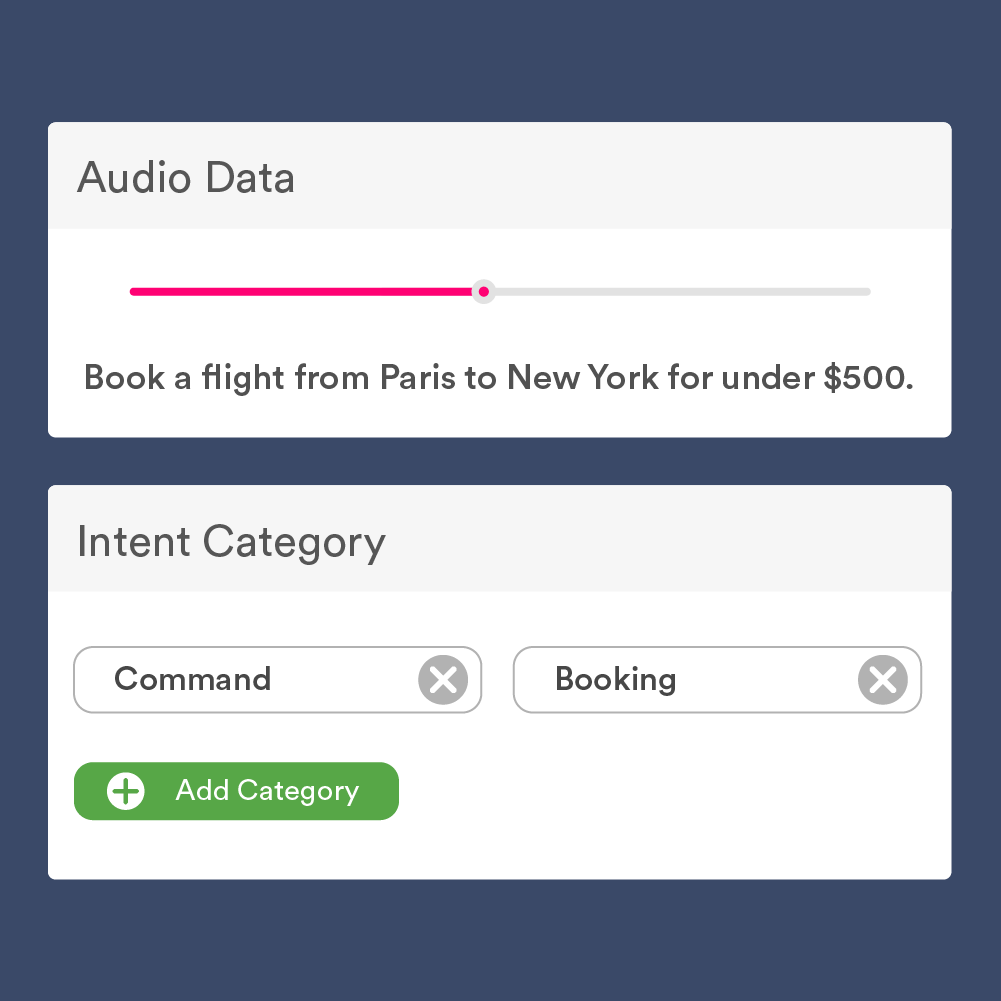

Intent Classification

Categorize audio or speech samples by intent to train chatbots, virtual assistants, and conversational AI.

Some of the many applications of audio transcription services include:

- Medical Transcription

- In the medical field, audio transcription services have proved valuable in enabling machine learning models to make precise predictions concerning medical diagnoses, doctors’ notes, patient interviews, and other critical data. These transcriptions can further be utilized in medical research and better organization of patient records.

- Speech-to-Text Assistants

- Language translation models can be trained effectively by using transcribed audio data. By transcribing speech data into written text, machine learning models can learn language patterns and provide precise language translations for applications in various industries such as education, media, broadcasting, and more.

- Call Center Analytics

- Audio transcription is also beneficial for VoC (voice of the customer) analysis. It allows machine learning models to gain insights into customer behavior, sentiment, and call trends by going through call center interactions. This allows businesses to enhance their customer experience and make data-driven decisions.

- Legal Transcriptions

- Another vital application of audio transcription services is in court proceedings and law enforcement investigations. These prove helpful in streamlining legal documentation, facilitating case analysis, and ensuring regulatory compliance. By transcribing audio content, these services aid in creating accurate records that assist legal professionals and investigators in their essential work.

Benefits of outsourcing audio transcription projects

The ability to produce thousands of high-quality transcripts in a short amount of time is only one of the advantages of outsourcing audio transcription services. More importantly, third-party service providers are better equipped to secure your data and handle large volumes of data.

- Professional Transcriptionists

- Outsourcing can allow access to teams of expert transcribers with subject matter expertise (e.g., legal or medical) or multilingual capabilities. In addition to reducing the overhead costs of sourcing these transcriptionists, it ensures that only the most qualified people are working on your project.

- Data Security

- When outsourcing transcription projects, you need confidence that your data is secure, particularly when your datasets involve PII, financial records, or other sensitive data. Partnering with an established partner compliant with industry standards can help minimize risk. Some examples include ISO standards, HIPAA for the health industry, and PCI for online retail and fintech.

- Scalability

- Outsourcing audio transcription work to a third party allows you to scale volume to accommodate fluctuations in demand. Unlike in-house teams, an experienced transcription partner can easily tackle an unexpected, large volume of work.

Many organizations can benefit from outsourcing audio transcription services. It is vital to partner with an experienced profile to make the most out of these benefits.

Choosing a reliable audio transcription company

So, what should you look for in an audio transcription service provider? Well, there are many things to consider.

- Accuracy

- This is the most crucial factor when choosing an audio transcription service provider. If you want your content to be accurately translated, ensure they have good skills and expertise in this area.

- Find out if the company has a track record of getting the job done and sticking with it. You should also ask about their skills and resources: are they experienced in this specific field? Do they have all the tools to deliver accurately and efficiently?

- Turnaround time

- You need to ensure that your audio transcripts will be delivered on time by setting a reasonable timeline and ensuring that turnaround times are not too long or too short for your project needs.

- Cost

- You should also check the cost of audio transcription services provided by the transcription service provider. Make sure they offer various service options that fit your budget and add more value to your business.

- Quality

- The quality of the audio transcription service is also important to ensure that you get the right results. You should look for a transcription service provider with a good reputation in this area to ensure that they will deliver high-quality content.

When looking for a transcription service, remember to do your research. You want to make sure that the company you choose can handle all of the requirements of your project and also has notable experience and expertise in the field of audio transcription.

Outsource Data Annotation Services to TaskUs

As the world’s fastest-growing business process outsourcing company recognized by Everest Group, we utilize a unique approach to data annotation that combines human intelligence with advanced machine learning technologies. Our expert teams of data annotators are best-in-class and can process a wide range of different types of data.

TaskUs provides high-quality audio transcription services in all major languages and file formats. With access to a talented, diverse, and global workforce, we can quickly recruit, train, and manage teams of any size to transcribe audio into a structured format specific to your requirements.

With our expertise and people-first philosophy, we have a proven track record of delivering high-quality audio data annotation services to one of the world’s top tech companies. We can handle projects of any size and deliver results that consistently meet or exceed our client’s expectations.

Through our collaboration with their team, we’ve been able to support a total of 30 international programs, all while delivering Ridiculously Good Results.

10 million items tagged weekly

A staggering 91.7% accuracy rate

New Automated Speech Recognition lines of business in the next two years