- What is Image Annotation?

- Benefits of Image Annotation for Machine Learning

- How Does Image Annotation Work?

- What are Image Annotation Best Practices?

- What are Common Image Annotation Service Types?

- What are Image Annotation Challenges?

- Case Study: Image Annotation for a Global Leading Autonomous Vehicle Company

- Outsource Image Annotation Service with Us

Machines can’t distinguish objects as well as humans do. To make computers “see” images better, we annotate important elements to improve accuracy.

We examine image annotation and how it helps develop effective computer vision applications for various industries.

What is Image Annotation?

Image annotation or image labeling is the process of attaching descriptive tags or labels to specific elements within an image to help computers understand and interpret the image’s content. This process enables machine applications to identify objects in real-life situations and provides additional context and information about the scene (e.g., what actions, qualities, or behaviors the objects show).

Tech-enabled companies offer image annotation services to their clients to best quantify, organize, and label their images for use in artificial intelligence (AI) and machine learning development. It’s increasingly becoming a lucrative service, all thanks to the rapid tech advancement of machine learning.[1]

Benefits of Image Annotation for Machine Learning

Labeling images is critical to training machine learning algorithms and developing computer vision systems in various applications and industries. For example, this service can be used to:

- Retail + eCommerce: Train algorithms to identify and categorize products in online retail stores.

- Healthcare: Assists in medical diagnoses, treatment planning, medical robotics, image analyses, and precision medicine

- Autonomous Vehicles: Helps self-driving cars navigate roads safely and effectively

- Security: Identify and track individuals and objects in security and surveillance systems

- Financial Services: Teaches AI to detect and flag fraudulent patterns in documentation, verification, and customer behavior

- Social Media: Refines alternate reality (AR) and virtual reality (VR) experiences by improving virtual object recognition and user interaction

Without accurate and reliable image labels to guide computer vision systems, applications would not be able to function as intended.

Thus, the demand for annotation services is increasing due to these key reasons:

- Enables computers to understand and interpret images:

- This service helps identify the objects, people, and actions depicted in an image and provides additional context and information about the scene. This information helps computer vision systems better understand an image’s meaning and significance.

- Helps to train algorithms:

- Labeled images serve as training data to teach algorithms to recognize and classify objects, people, and actions in images and videos. Machines can learn to identify similar elements in real-life situations by providing accurate and reliable labels for numerous images.

- Improves the accuracy and effectiveness of computer vision systems:

- Accurate and reliable annotations are essential for deploying computer vision systems in real-world applications. Without them, the systems may be unable to accurately recognize and classify objects and actions, potentially leading to dangerous errors.

A machine learning algorithm aims to recognize elements in an image by itself, but training such a model requires a large set of pre-labeled image data. We must prepare the information with meticulous attention to detail to ensure efficient algorithm performance.

How Does Image Annotation Work?

Teaching a machine to recognize and understand images is a gradual process, much like how a newborn child learns to see and make sense of the world. Image annotation plays a crucial role in developing computer vision systems, as it helps to provide the foundational tool for their ability to perceive and recognize objects in visual data. Here’s how:

- Collect Images: The process begins with gathering diverse images that resemble the scenarios the AI will encounter. The dataset should be large and varied to cover as many situations as possible.

- Choose Tools: The next step is selecting the right annotation tools, ranging from simple geometric shapes to detailed pixel-level markers. The selection depends on how granular the AI’s understanding needs to be.

- Annotate Images: Image annotation involves going through each image and marking different objects with descriptive labels such as “cat” for a cat and “tree” for a tree. This step names and outlines the shape or position of each item within the image.

- Check Labels: After the initial labeling, each annotated image is reviewed to ensure that markers are accurate and consistently applied, factors that are crucial for reliable AI learning.

- Train AI: Once the annotation is complete, the dataset will train the AI to recognize objects and patterns independently when analyzing new images.

While meticulous, annotating images is crucial as it translates the complexity of the visual world into a format that machines can understand and interact with.

What are Image Annotation Best Practices?

To make the most out of the process, here are some best practices to look out for with your team of engineers:

- Start small: Begin with a small number of images. This can help the team establish clear guidelines and standards for labeling and provide the opportunity to establish any feedback or adjustments before proceeding with the full dataset.

- Remember: quantity, quality, diversity: Ensure that your team has access to a sufficient and diverse number of images for the model to recognize a wide range of objects, poses, and conditions. For example, if your target is to label images of cars, it would be good to include images of vehicles from different manufacturers in different colors and various lighting conditions, image resolutions, etc.

- Conduct thorough quality checks: This ensures the labeled dataset is accurate and reliable. This process may involve manually reviewing the labels to check for errors or inconsistencies and testing the model on a small subset of the dataset to ensure it performs well.

- Quality still wins over quantity: Remember to exclude blurry images or lack appropriate visual information, which will hurt the model’s performance. Some images may contain misleading or confusing features that could interfere with the training process quality, leading to incorrect or inconsistent results.

By adopting these practices, teams can refine their annotation efforts to improve their machine learning models.

What are Common Image Annotation Service Types?

Image annotation companies categorize types of annotation services to assist clients in choosing the service that they need. Here are a few examples of image annotation types:

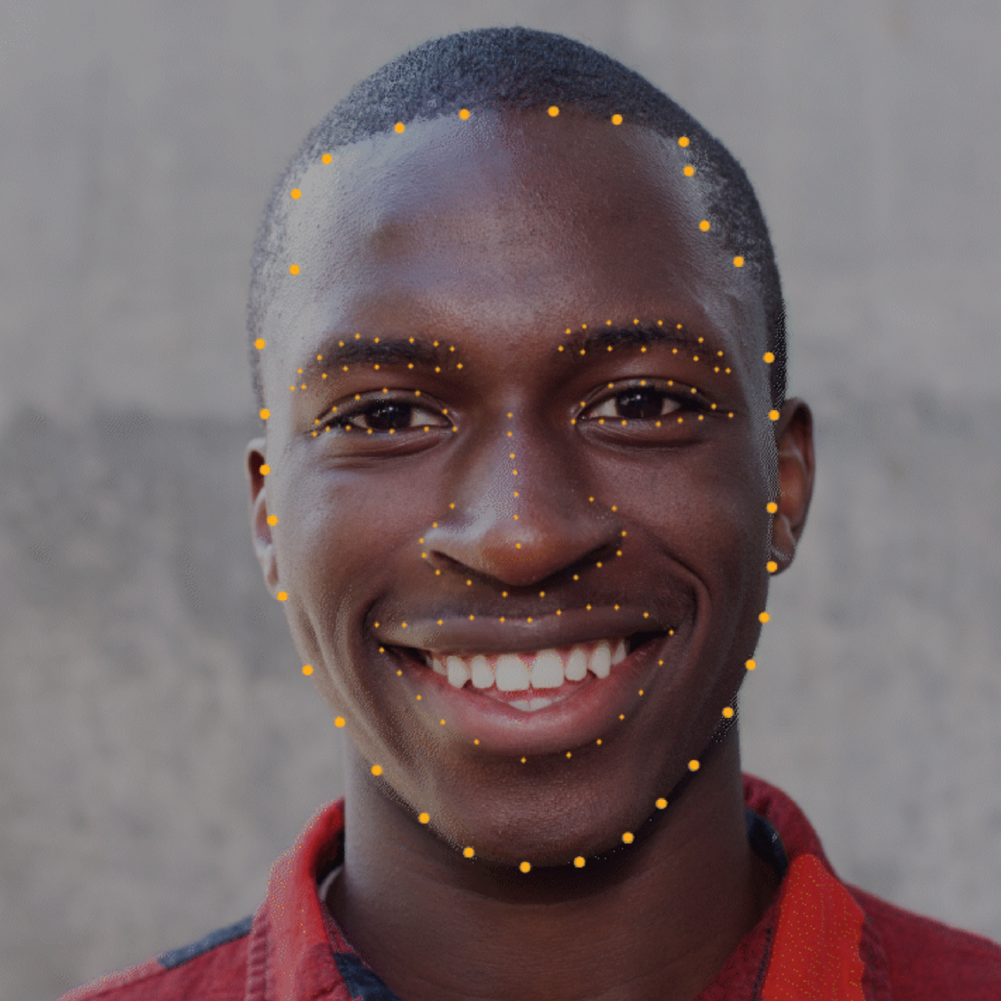

Key Point Annotation: Also known as pose and landmark recognition, this annotation type lets models capture the finest details in small objects and shapes. Experts use key points to connect the object and track its movement. This service is commonly used in AI facial recognition, posture recognition for alternative (AR) and virtual reality (VR) applications, sign language transcription, and even robotic-assisted surgery.

Bounding Boxes: This uses digital rectangles to determine object position with x and y coordinates. Bounding boxes are commonly used in object determination for self-driving cars, auto-tagging products for optimizing the eCommerce search experience, image detection for drone imagery, and even monitoring plant growth on agricultural farms.

Polygon Annotation: Modern image labeling services handle irregularly formed images and objects with highly flexible polygons for a more accurate depiction. Polygon annotation is commonly used for aerial mapping views of natural and artificial bodies of water, sidewalks, road edges, and more. In medical technology, this type supports outlining internal organs in CT scans.

Image Classification: Image classification involves assigning an image to one or more predefined categories or classes. For example, a model might be trained to recognize and classify different types of animals, such as dogs, cats, or birds. With this type, the model will have to be trained based on a large dataset of labeled images of animals (e.g., “dog,” “cat,” “bird”). The algorithm would then learn to recognize the features and patterns of each labeled class (in this case, animal), such as its body shape, face, and more.

Image Segmentation: An image is usually divided into multiple segments or regions—background and object, for example. Dividing an image requires the algorithm to analyze it and identify the boundaries and edges of the different objects pixel by pixel. It then assigns each pixel in the image to a specific object or background class, creating a map or mask showing the different objects and their relationships.

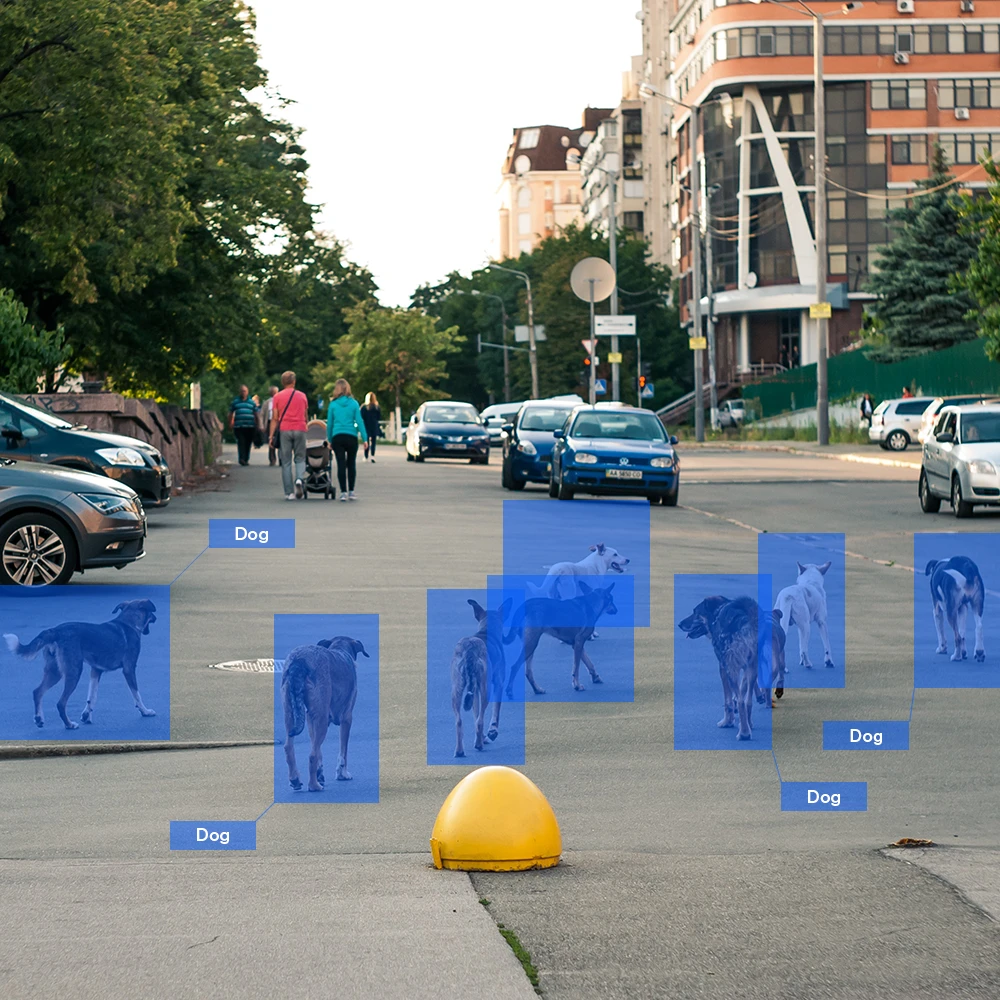

Object Detection: This is a form of image annotation used to identify, locate, and count the number of objects in an image and then label each one correctly. Object detection is typically done by providing the model with a large dataset of labeled images, where each image includes the bounding boxes or regions that enclose the objects of interest.

Pose Estimation: This type estimates the position and orientation of an object or person within an image. It is used in various applications, such as augmented reality, robotics, and sports analysis. It allows computers to understand the spatial relationships between objects and the environment and can be used to track the movement and behavior of objects over time.

What are Image Annotation Challenges?

An image annotation process requires handling a large volume of data with high accuracy and speed, and it usually comes with its own set of internal and external complexities.

Here are some of the common challenges faced during image annotation:

- Workforce: Any image annotation project needs an experienced team of annotators who can handle a vast amount of data and efficiently perform annotations. Multiple quality checks might be required depending on the project’s needs, which can increase the team’s burden and further impact their productivity.

- Annotation Tools: Appropriate labeling tools and software are crucial for the success of any annotation project. Unfortunately, these tools and technology usually come with a high price tag, which many businesses overlook in an attempt to cut costs and stay within budget.

- Quality Data: A machine learning model can only be as good as the AI data it’s trained with. However, obtaining such datasets for annotation projects can be challenging and expensive.

Due to these challenges, many companies rely on image annotation outsourcing to reliable service providers who can ensure efficiency and accuracy. Outsourcing offers several benefits, including access to specialized skills, faster turnaround times, and competitive image annotation pricing. This makes it a favorable option for organizations that require high-quality labeled datasets.

Case Study: Image Annotation for a Global Leading Autonomous Vehicle Company

A leading US-based autonomous vehicle company recognized the benefits of outsourcing and decided to partner with TaskUs for our AI services and training data capabilities. This company chose Us Us to scale, refine, and enhance AI training through high-precision data.

We developed a quality management framework for each dataset’s workflow, including a calibration and continuous feedback loop, quality parameters, and scoring guidelines, to ensure that the resulting image annotation work was thorough and precise.

Our work resulted in:

- 1,800 Full-time equivalents (FTE) vs initial 100-FTE project

- 24 daily individual tasks vs 20 target

- 98% accuracy score vs 90% target

- 115% overall production rate vs 90% target

Outsource Image Annotation Service with Us

TaskUs is the premiere choice for leading companies across industries looking beyond asking, “What is image annotation?” Our dedication to quality management, use of adaptable labeling tools, project management proficiency, and stringent data security measures sets Us apart as the standard for excellence in the field.

Our successful approach involves unlocking human excellence through AI. By investing in a people-first culture and cutting-edge tailored solutions, we help you create effective AI systems, exceed customer expectations, and reduce costs.

Choose a proven partner for your Image Annotation. Choose Us.