Data is the backbone of any machine learning model. For a model to succeed, it must be trained on high-quality data, and lots of it. Most data scientists will agree that gathering too much data is better than having too little, especially for computer vision applications that rely on visual data in the form of images and videos. This is why data collection is a crucial step in the life cycle of a machine learning model.

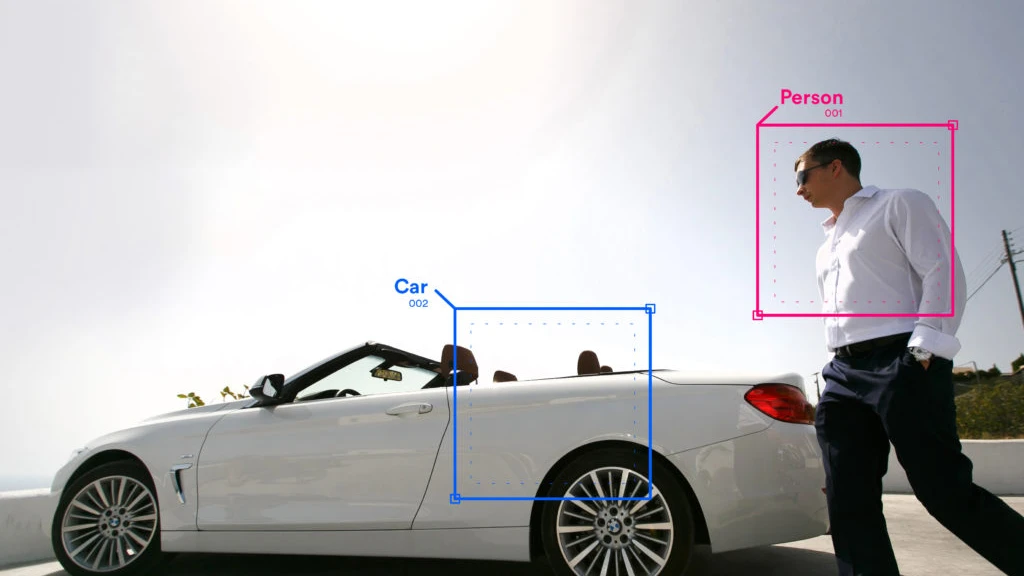

Video data collection is an invaluable part of training computers to actually ‘see’ and perform their tasks. Massive amounts of data in various formats, such as images, video, and speech, are collected and annotated to allow artificial intelligence (AI) and machine learning models to become smarter, more accurate, and more proficient at recognizing that content.

Starting a computer vision project? A good first step is to better understand the importance of video data collection, the collection process, and the quality standards that must be met—all of which will be discussed in this article.

What is video data collection?

Video data collection is the process of capturing video data. It can be done manually, via handheld devices like phones and cameras, with the data uploaded through a data collection platform. For more efficient processing, video data collection can be automated by using in-production devices, or the data can be gleaned from existing sources (e.g. security cameras, car dashboard cameras, etc.).

Video data collection helps form the basis for models to perform various types of actions such as facial recognition, object tracking, scene recognition, and more. The more data you collect, the better and more accurate the model will be.

The datasets required for video annotation projects should be diverse across factors such as demographics, lighting conditions, and background noise, among others, to reduce the risk of bias in the machine learning model.
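
One way to keep an eye on this kind of diversity is to tally clip metadata as it comes in. The snippet below is a minimal sketch, assuming each clip carries a small metadata record; the field names (`lighting`, `age_group`), the sample values, and the 30% threshold are all hypothetical and chosen only for illustration.

```python
from collections import Counter

# Hypothetical clip metadata; field names and values are illustrative only.
clips = [
    {"id": "clip_001", "lighting": "daylight", "age_group": "18-30"},
    {"id": "clip_002", "lighting": "daylight", "age_group": "31-50"},
    {"id": "clip_003", "lighting": "low_light", "age_group": "18-30"},
    {"id": "clip_004", "lighting": "daylight", "age_group": "51+"},
]


def coverage(clips, attribute):
    """Return the share of clips for each observed value of one attribute."""
    counts = Counter(clip[attribute] for clip in clips)
    total = sum(counts.values())
    return {value: count / total for value, count in counts.items()}


def underrepresented(clips, attribute, min_share):
    """List observed values whose share of the dataset is below min_share."""
    shares = coverage(clips, attribute)
    return sorted(value for value, share in shares.items() if share < min_share)


# Which lighting conditions make up less than 30% of the collected clips?
lighting_gaps = underrepresented(clips, "lighting", min_share=0.3)
print(lighting_gaps)
```

Note that a check like this only sees values that appear at least once. A condition that is missing from the dataset entirely would need to be compared against a list of expected values.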

Why is video data collection essential in machine learning?

In 2021, the global data collection market was valued at $1.66 billion, and along with the spike in the use of data collection comes a growing need to collect large amounts of high-quality data. The growing number of users of autonomous vehicles, augmented and virtual reality, drones, cameras, and other gadgets actively contributes to the demand for video data collection and video annotation.

Autonomous vehicles

The goal of video data collection for autonomous vehicles is to train AI models on thousands of hours of footage, which is then used to develop an algorithm that can recognize objects in real time. The process involves a combination of human expertise and machine intelligence.

For example, if you want your self-driving car to recognize pedestrians on its own, you’ll need data from different angles so the model can distinguish which objects are people and which ones aren’t. By using this data, specialists can train the machine to distinguish humans from everyday objects, follow traffic rules, avoid potential accidents, and reach its destination safely.

Augmented reality (AR) and virtual reality (VR)

AR and VR are popular, especially in the gaming and entertainment industry, but it’s only now that they are reaching their full potential. Today, businesses are venturing into VR to train new employees and create immersive marketing experiences.

AR apps, meanwhile, are already being used by consumers on their phones, with more apps becoming available all the time. As more people buy these devices and more apps integrate AR and VR, the amount of video data that needs to be collected will keep growing.

Retail technology

Retail technology has become essential in providing end-to-end automation solutions for store operations. The actionable data that these solutions generate allow our client’s customers to create better and more efficient stores, lower their costs, and increase their profitability.

Another common use of video data in retail tech is theft monitoring and risk assessment. Retail ML models can be built to flag risks such as merchandise being stolen by bad actors or suspicious baggage being left unattended.

How to collect video data for computer vision

The secret sauce to a successful computer vision project is high-quality video data. High-quality video data is required to train computer vision models to identify certain features or characteristics so machines can make accurate predictions and take appropriate actions in production environments.

There are different ways to collect high-quality video data:

- Open source datasets: Because these datasets are hosted on shared repositories, it’s easier for companies to obtain the data they need for video annotation projects.

- Web scraping: This is the process of using bots or other automated tools to obtain data and content from websites. It’s important to make sure you have the rights to the data you collect.

- Synthetic datasets: Synthetic datasets are generated to test and train machine learning models, such as in the case of self-driving vehicles, and to produce large amounts of certain types of data, for instance by using video game engines.

- Manual dataset generation: This approach is by far the most tried and tested way of collecting data. You can collect the data you need by conducting surveys or crowdsourcing. Obtaining data via crowdsourcing can lead to more diverse and less biased results compared to, for instance, synthetic data.
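
When a dataset mixes several of these sources, it can help to record where each clip came from and whether usage rights have been confirmed. The following is a rough sketch of such a manifest, not a prescribed workflow; the `ClipRecord` class, the source labels, the file paths, and the license strings are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical labels for the four collection methods listed above.
SOURCES = {"open_source", "web_scraped", "synthetic", "manual"}


@dataclass
class ClipRecord:
    path: str
    source: str
    license: Optional[str] = None  # None means usage rights are not yet confirmed

    def __post_init__(self):
        # Reject records whose source is not one of the known labels.
        if self.source not in SOURCES:
            raise ValueError(f"unknown source: {self.source}")


def cleared_for_training(records):
    """Keep only the clips whose usage rights have been recorded."""
    return [record for record in records if record.license is not None]


# Illustrative records; paths and license names are made up.
records = [
    ClipRecord("clips/a.mp4", "open_source", license="CC-BY-4.0"),
    ClipRecord("clips/b.mp4", "web_scraped"),
    ClipRecord("clips/c.mp4", "manual", license="contributor-consent"),
]

usable = cleared_for_training(records)
print([record.path for record in usable])
```

Here the scraped clip is held back until someone confirms its rights, while the other two pass through. A real project would likely track more detail, such as consent forms or license terms.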

Data scientists and machine learning researchers have a myriad of options when it comes to building training datasets. Especially for machine learning models that require image-based data, video data collection is a great way to obtain the necessary training datasets. Choosing the right video data collection process according to your project needs is imperative to ensure your model’s success.

Outsourcing Video Data Collection with Us

As a company that leverages the power of next-generation technology, we equip our employees with tools and expertise to provide ridiculously good results to our clients and customers.

One of our projects required the collection of high-quality and distinct data samples to train our client’s AI. To obtain diverse and unbiased data, we launched a crowdsourced video annotation and data collection project on TaskVerse. As a result, we collected 25,000 data points representing 9 ethnic groups from 6 countries, with varying age groups and genders.