Content moderation is essential for maintaining credible and positive online environments in the growing market for user-generated content platforms. As the volume of user-generated content increases, many businesses outsource content moderation to ensure a safe and secure online atmosphere.

This article explores the best practices for content moderation outsourcing and highlights its various benefits. By outsourcing content moderation effectively, businesses can navigate the complexity of user-generated content and create safer and more meaningful online experiences.

What is Content Moderation Outsourcing?

Content moderation outsourcing involves partnering with third-party providers to manage user-generated content, such as text, images, and videos, across digital platforms. Outsourcing this function helps keep content quality and appropriateness in check.

Outsourcing provides an efficient solution by giving businesses access to experienced moderators who can quickly evaluate content according to established guidelines and cultural nuances. This approach also provides the scalability to manage variations in content volume without overburdening internal teams. Furthermore, outsourcing improves cost-effectiveness by removing the need to hire and oversee an in-house workforce.

Overall, content moderation outsourcing is an efficient way to manage user-generated content. It ensures adherence to guidelines, promotes a positive online environment, and allows companies to focus on core business initiatives. The following sections will dive deeper into content moderation outsourcing, including its advantages, difficulties, and recommended approaches.

Embracing the Benefits of Content Moderation Outsourcing

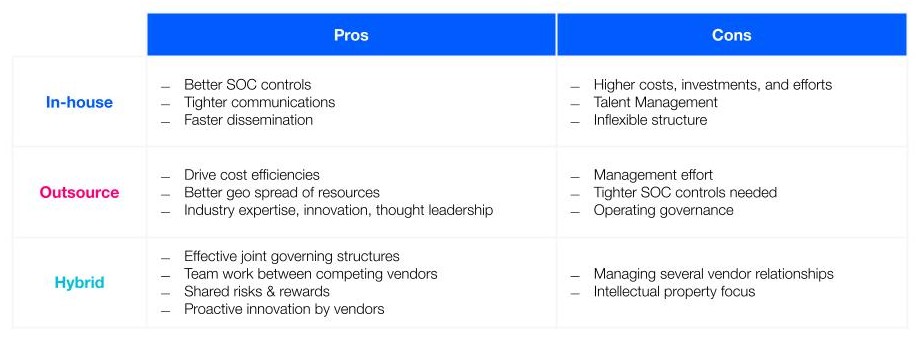

Content moderation outsourcing offers several compelling benefits over moderating with internal teams. Here’s a table that breaks down the pros and cons of both:

In addition to the aforementioned benefits, outsourcing with a trusted vendor can help improve the following factors:

Scalability

Outsourcing providers can usually handle fluctuations in content volume on digital platforms. This flexibility eliminates the need for in-house recruitment and training during high-demand periods while avoiding unnecessary overhead costs during lean seasons. Dynamic scalability helps content quality remain high regardless of user volume and engagement.

Cost Efficiency

Content moderation outsourcing is often more cost-effective than building an internal team. It avoids the expenses of recruitment, training, and retention, allowing businesses to focus on core operations. This approach streamlines budgets and provides cost predictability with transparent pricing models.

Round-the-Clock Coverage

Outsourcing content moderation to global providers ensures 24/7 coverage across different time zones. This guarantees a consistent user experience, builds trust, and creates a safer online environment. Businesses can quickly address and resolve potential problems with a dedicated team always monitoring content.

Best Practices When Outsourcing Content Moderation

Clear and consistent content moderation guidelines, ongoing training, and regular evaluations of moderator performance are crucial for quality control. Together, they ensure that moderation decisions align with your brand values and platform standards.

Cultivating cultural sensitivity is also important in content moderation outsourcing. User-generated content stems from diverse cultural backgrounds, so moderators must be able to discern and respect cultural nuances. Adequate training and sensitivity guidelines help prevent cultural misunderstandings and potential biases in content assessment.

When outsourcing content moderation, businesses must prioritize data privacy and security. With personal and sensitive information stored and published online, it’s vital to implement strong protocols that protect user data, comply with regulations, and prevent unauthorized access and misuse.

Safeguard Online Spaces with Us

Content moderation poses many challenges due to the scale of modern platforms and constantly changing trends. Therefore, finding effective solutions that balance efficiency, expertise, and flexibility is essential.

At TaskUs, we understand the growing need for Trust + Safety services, which is why we are committed to creating the most secure online environment possible while still allowing users to express themselves safely and with digital civility.

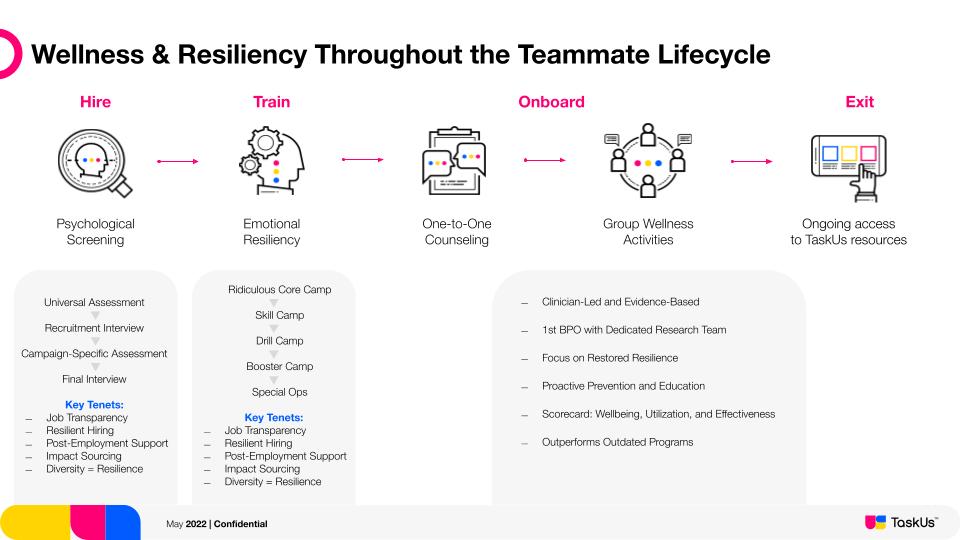

We have deep experience building robust content moderation infrastructures for our clients, as well as running our world-class Wellness & Resiliency program.

From customized workflows that align with your brand’s values to a globally distributed team proficient in diverse languages and cultures, TaskUs offers a unique blend of expertise and safety in content moderation outsourcing.