The European Union is considered a champion in regulating digital giants. Having established game-changing initiatives such as the Right to Be Forgotten (RTBF) and the General Data Protection Regulation (GDPR), the region is a trailblazer for countries worldwide seeking to regulate the digital landscape.

Today, the EU is once again taking the lead by establishing new rules for big tech players with the Digital Services Act (DSA).

What is the Digital Services Act?

The Digital Services Act (DSA) is legislation introduced by the European Commission that will establish:

- new rules for online platforms, including requirements for transparency, accountability, and responsibility for the content that they host or promote.

- new obligations to prevent the dissemination of illegal content such as hate speech, terrorist content, and child sexual abuse material.

- a need for online platforms to invest in more robust content moderation and removal systems, because systemic failures will be sanctioned.

Furthermore, the Digital Services Act will allow users to challenge platforms’ content moderation decisions through a more effective appeal process, which also creates a risk of spikes in appeal volume. Our Trust + Safety department works tirelessly to handle sudden influxes of this kind.

Who is impacted?

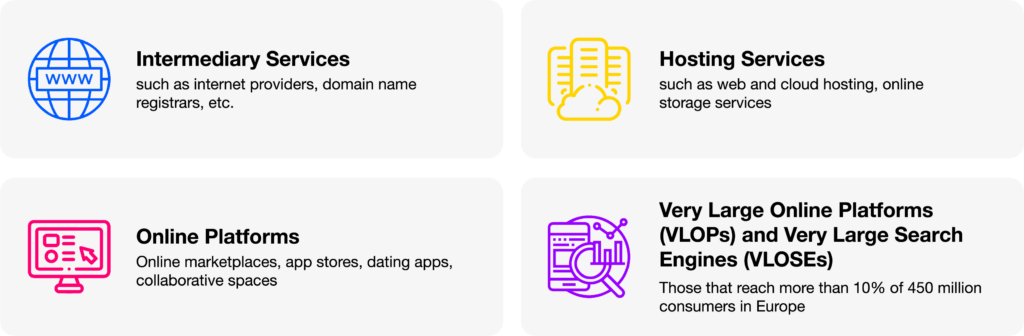

The DSA applies to companies whether they are located inside or outside the EU, and its rules differ based on each company's role, size, and impact in the online ecosystem.

What are the impacts of the Digital Services Act?

Millions of European citizens using online services, platforms, and apps daily will benefit from the DSA. Not only will the Act impose strict rules to ensure that companies provide a safer online experience, but it will also push companies to keep better social media support and wellness teams on standby.

The Digital Services Act (DSA) could significantly affect the online ecosystem in the European Union (EU) and beyond. In case of non-compliance, companies can face sanctions.

The DSA is expected to have an impact beyond the EU, as many online platforms operate globally. Its provisions could therefore set a new standard for digital regulation and may influence other countries to adopt similar measures.

Overall, the DSA represents a significant shift in the way that online platforms are regulated, and it has the potential to improve the safety, transparency, and fairness of the online ecosystem. However, its implementation and enforcement will be crucial in determining its actual impact.

What is the goal of the Digital Services Act?

The goal of the DSA is to regulate the online space, particularly large online platforms and search engines, to make it safer for users, protect their fundamental rights, and ensure fair competition. EU citizens can benefit from the Digital Services Act in different ways:

- Less exposure to illegal content

- Better protection of fundamental rights and online protection for minors

- Prevention of manipulation or disinformation

To deliver these benefits, the DSA sets out to:

- Set clear expectations and mechanisms for handling harmful and illegal online content

- Establish a transparency and accountability framework to ensure that human + AI moderation activities are fair and transparent

- Rebalance the responsibilities between users, platforms, and public authorities to allow users to challenge content moderation decisions

- Institute collaboration between platforms, trusted flaggers, and EU authorities

- Launch independent audits and assess the risk management systems of very large online platforms (VLOPs)

How Can TaskUs Help?

Recognized by Everest Group as the world’s fastest-growing business process service provider in 2022 and highly rated on Gartner Peer Insights, TaskUs is continuously enhancing ways to curate safe digital spaces for all, including by working with ridiculously brilliant minds.

Through our robust end-to-end solutions and exceptional human + tech capabilities, you can count on Us to help you:

- Scale and staff a workforce across different countries and time zones.

- Provide an experienced team to test the implementation of pilot projects.

- Structure escalation teams (tiers) based on the level of nuance and difficulty of the work.

- Create a clear process and channels of communication to enforce the EU requirements on existing policy guidelines and reporting flows.

- Provide wellness programs and psychological support to the team handling sensitive content.

- Spot and flag cultural trends on societal issues and provide solutions to tackle them, avoiding systemic risks and failures.

With the DSA’s full implementation approaching, companies must act fast to understand their roles and responsibilities under the new regulations. By doing so, they can create a safer and more secure online environment for their users.