Scaling Global Ground Truth for Consumer Robotics

Diverse egocentric data capture eliminates AI bias and ensures real-world reliability

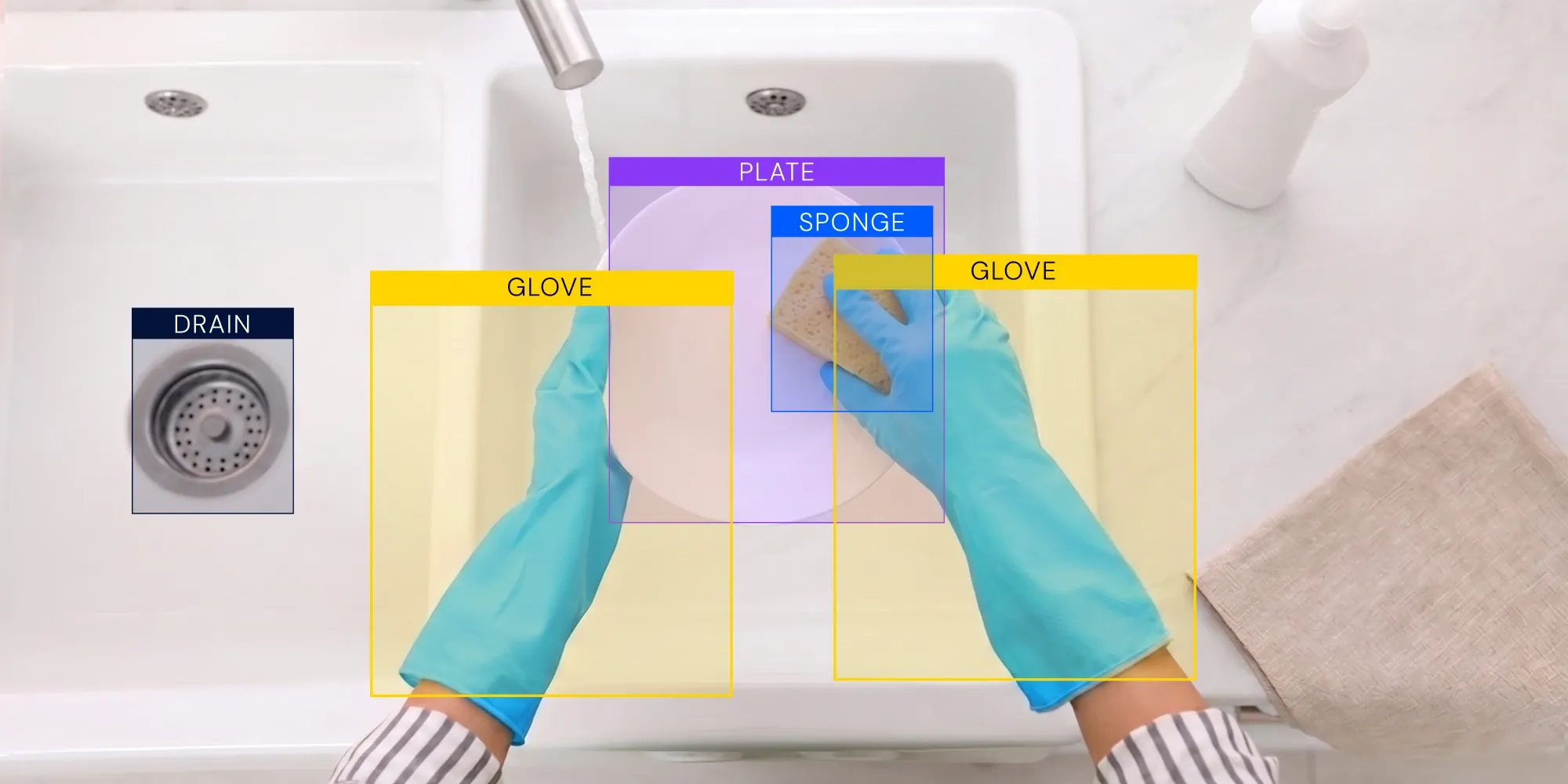

Uncontrolled environments reveal the limits of physical AI. Human perspective data makes it work.

Robots excel in predictable environments, but a typical home kitchen is a different story. Shifting sunlight, reflective surfaces and cluttered countertops confuse a robot's spatial perception. To work reliably, a robot's AI needs to see the world the way humans do.

But collecting that kind of training data at scale is a massive hurdle.

We helped a leading tech company build a diverse, egocentric dataset to solve this. The goal was to gather enough real-world video that the model would neither fail nor show demographic bias once it reached a consumer's home.

How to build global ground truth

For global sourcing, we used our TaskVerse platform to quickly recruit 200 participants across six countries. By sourcing from different types of homes and cultures, we helped ensure the model could recognize objects in any kitchen, anywhere, addressing bias from the very start.

Participants recorded their spaces in 1080p from a first-person point of view to capture a true real-world perspective. This ground truth data closely mimics what the robot's sensors see, which is essential for training a model to handle the messiness of real life.

Every video went through a multi-stage quality check for lighting, resolution and visibility. This filtered out low-quality footage, so that only high-fidelity data entered the training pipeline.

Bringing robots to life

Ultimately, this project demonstrated that, with a smart enough data strategy, AI can find order in real-world chaos. This solid foundation of ground truth prepares a robot for what you can't simulate in a lab: everyday life.

Results

25K

100%

6